Sweating those Assets

Part of our CTO Series of articles aimed at technology decision-makers.

IT hardware and software are expensive. Naturally, we want to maximise the return we make on that investment, and one way to do this is to keep it in production for as many years as possible. But the $64,000 question is: for how long? There will come a point when, much like any other machine, it makes economic sense to buy a new one. Usually this is when the cost of repairs and maintenance begins to rise, or when the machine can no longer meet the demands placed upon it. For something like a car or a factory machine this may be easy to determine, but for IT hardware and software it can be harder to work out.

A few months ago we took a call from a Sydney-based business that had suffered a system failure and was struggling to get it back up and running. While the system was no longer used for production, it was used for looking up old sales invoices and producing all manner of reports for the auditors when needed. So while it wasn't affecting the day-to-day running of the business, it was still an extremely important system, and one that needed to be recovered. To help return the system to service we needed to learn as much as we could about it: the hardware it ran on, the operating system and software applications it ran and interacted with, and so on. It soon emerged that the hardware was approximately 10 years old, and the operating system and underlying database software were nearer 20 years old!

No one really had much idea how it worked or how it was configured. All we could glean was that there had been some sort of glitch, the system had crashed, and no one knew how to get it running again. Sound familiar? From the get-go it was clear we were dealing with a number of issues:

1) Old compute hardware with no warranty, and no support and maintenance agreement with the manufacturer

2) Old software, with no support or maintenance agreement

3) A lack of documentation and knowledge internally on how the system was configured

We knew we'd have to start from the ground up and work out what the problem was along the way, producing documentation as we went to help the internal IT support guys deal with any issues that might arise again (more on this later).

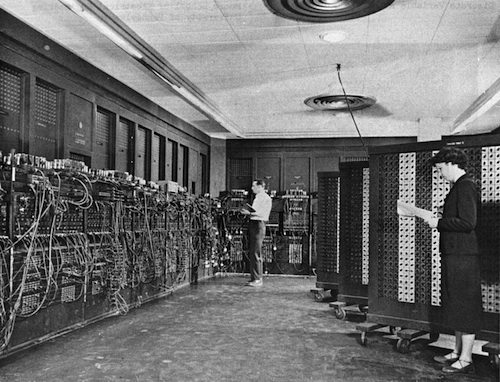

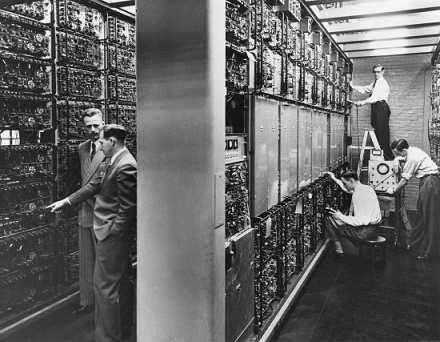

We started with the hardware and quickly established that all was well, with no faults or failures. Apart from hard drives, IT compute hardware can run for donkey's years. We've all heard stories of servers being inadvertently bricked up behind a wall, only to be discovered years later still running perfectly well. My favourite is the NetWare server that ran for 16 years without a hitch. Luckily our engineers have many years of experience with all manner of infrastructure hardware, so dealing with these older servers was a simple trip down memory lane. Unfortunately, the same could not be said for the software. The database software version dated back to 1997. The vendor could not provide support, and it was proving difficult to find a DBA who had worked with 20-year-old technology. As luck would have it, one of our colleagues did have the necessary experience, and together with our hardware engineers we were able to return the system to service. A lucky escape for our client, we think, and an important lesson as well.

So what did we learn and what would we recommend for others needing to run and maintain such a system?

7 key recommendations for running an aged system

1) Ensure the system is properly documented.

If you are going to intentionally run a system on older hardware and software, it is imperative that it's properly documented. As the likelihood of a glitch increases over time and people's memories fade, having documentation that describes how the system and all its components are configured will make troubleshooting much easier.

2) Ensure you have a plan for supporting the hardware.

The easiest way to do this is to have a support agreement in place with the vendor or a specialist hardware support company. If this is not possible, or is simply too expensive, keep an adequate stock of spares. This should include the items that can and do fail over time, such as power supplies, hard drives, and any component that is mechanical in nature or subject to heat.

3) Ensure you have a plan for supporting the software.

As with hardware, consider some form of support agreement with the appropriate software vendors. You should also ensure that you have the correct software licences in place. Licences aside, if a support agreement is not economically feasible, or support is simply not available, then documentation is even more imperative. We would also suggest documenting who in the organisation has skills or experience with the system and could be called upon if issues arise in the future. However, if those skills are scarce, particularly in the wider marketplace, we suggest considering other options, such as upgrading the software to a version that is supported by the vendor.

4) Consider the cloud.

Some of the risk of running an aged system can be mitigated by taking the old hardware out of the equation. Luckily, the advent of the cloud in recent years gives us plenty of options for hosting workloads. This is certainly worth exploring if you have any concerns at all about the viability of the hardware.

5) Ensure you have a backup!

This is something that is often overlooked, especially if the data within the system no longer changes. If there is some form of hardware failure, there is a good chance the data will be lost or corrupted, especially if the failure is storage related. The most important thing of all is to have a recoverable copy of the data that has been tested and is known to work.
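One small part of "tested and known to work" can be automated: checking that a restored copy matches the original byte for byte. The sketch below assumes the system's data lives as ordinary files on disk, and the function names `build_manifest` and `verify_restore` are hypothetical, not part of any product. Matching checksums only show the files came back intact; they do not prove the old database software can open them, so a periodic full restore test is still worthwhile.

```python
import hashlib
from pathlib import Path


def build_manifest(root: Path) -> dict:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    manifest = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            digest = hashlib.sha256()
            with path.open("rb") as fh:
                # Read in 1 MiB chunks so large database files don't exhaust memory.
                for chunk in iter(lambda: fh.read(1 << 20), b""):
                    digest.update(chunk)
            manifest[path.relative_to(root).as_posix()] = digest.hexdigest()
    return manifest


def verify_restore(source: Path, restored: Path) -> list:
    """Compare a restored copy against the source; an empty list means they match."""
    expected = build_manifest(source)
    actual = build_manifest(restored)
    problems = []
    for name, digest in expected.items():
        if name not in actual:
            problems.append(f"missing: {name}")
        elif actual[name] != digest:
            problems.append(f"mismatch: {name}")
    # Files present in the restore but not in the source are also worth flagging.
    for name in sorted(actual.keys() - expected.keys()):
        problems.append(f"unexpected: {name}")
    return problems
```

In use, you would restore the latest backup to a scratch location and call something like `verify_restore(Path("/data/archive"), Path("/mnt/restore_test/archive"))` (both paths hypothetical), treating any non-empty result as a failed test.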

6) Monitoring is a must.

While the system may not be used for production, it's just as important to have it monitored, especially when age has increased the likelihood of hardware failure. Some components, such as hard drives, will show warning signs of imminent failure. Some form of pre-failure alerting gives you the chance to shut the system down gracefully before it fails and carry out a repair, without having to go through a lengthy recovery process.
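For hard drives, the usual source of pre-failure warnings is SMART data. A few attributes, notably reallocated sectors (ID 5), current pending sectors (ID 197) and offline uncorrectable sectors (ID 198), are commonly treated as early signs of trouble. The sketch below assumes the raw values have already been collected (for example from the attribute table printed by smartmontools' `smartctl -A`) and passed in as a dictionary; the alert rule itself (any non-zero value, plus any growth since the last reading) is an assumed policy to tune, not a vendor threshold.

```python
from typing import Dict, List, Optional

# SMART attribute IDs commonly treated as early warnings of drive failure.
WATCHED_ATTRIBUTES = {
    5: "Reallocated_Sector_Ct",
    197: "Current_Pending_Sector",
    198: "Offline_Uncorrectable",
}


def pre_failure_alerts(
    current: Dict[int, int], previous: Optional[Dict[int, int]] = None
) -> List[str]:
    """Return alerts for watched SMART attributes.

    `current` and `previous` map SMART attribute ID -> raw value.
    Assumed policy: alert on any non-zero raw value, and separately
    flag any increase since the previous reading.
    """
    alerts = []
    for attr_id, name in WATCHED_ATTRIBUTES.items():
        raw = current.get(attr_id, 0)
        if raw > 0:
            alerts.append(f"{name} (ID {attr_id}) raw value is {raw}")
        if previous is not None:
            before = previous.get(attr_id, 0)
            if raw > before:
                alerts.append(f"{name} (ID {attr_id}) increased from {before} to {raw}")
    return alerts
```

Run on a schedule and wired to email or a ticketing system, a non-empty result is the cue to plan a graceful shutdown and swap the drive from your spares.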

7) Have a recovery plan!

Knowing what you are going to do if the unthinkable happens is vital. Ideally you will have tested the plan in some form to prove its viability. It may mean spinning up a server in the cloud or digging that spare server out of storage. Having a plan to follow takes the stress out of the situation, as the focus will be on execution rather than on working out what to do. Again, ideally this recovery plan should be pre-approved by your business and IT stakeholders.

Expected Lifespans

As we said earlier, the $64,000 question is knowing when it's time to bite the bullet and perform a refresh. Without going into a long dissertation on Moore's Law and compute economics, there are a few simple rules of thumb that can be followed.

Criticality

If the availability of a system is paramount to your business, then regular hardware and software refreshes are a no-brainer. Both hardware and software need to be maintained in a vendor-supported state.

Typically this type of system will have a lifespan of 3 to 5 years, with the final year being used as the time when the hardware is refreshed. The timeline is largely dictated by the hardware vendors, as their warranty is usually 3 years, with an option to extend support for another 2. Anything beyond a total of 5 years needs to be negotiated on a case-by-case basis, but the fact is that anything beyond 5 years is living on borrowed time.

Performance

The workload demands on a system will also determine, to some extent, its useful lifespan. Depending on how a system scales, some systems may have a lifespan of only 18 months. Under these circumstances it's often cheaper to replace the hardware with something newer and faster. As with criticality, these sorts of scenarios make it a no-brainer to determine when a hardware refresh is due.

Purpose

The purpose or use of a system may change over time, as it goes from being a front-line business asset to something less critical, such as an archive kept for ad-hoc queries and reports. It's with these sorts of systems, where uptime is not critical, that it becomes difficult to determine when it's time to refresh. If there is a planned end date for the system and it just needs to hold together until then, following the recommendations above should help. If the end is less clear, then it becomes a trade-off between the cost of support and maintenance and the cost of a hardware and software refresh. However, there is no reason why a system such as this, built on quality hardware, could not run for at least 10 years. The rule of thumb we have used for IT hardware is 3 years in production, followed by 2 years in dev/test (not UAT), followed by a period running non-critical workloads or as lab kit if needed.
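The 3-years-production, 2-years-dev/test rule of thumb is simple enough to encode in an asset register so each server's suggested role can be reported automatically. This is a minimal sketch: `lifecycle_stage` and the commissioning-date input are hypothetical, and the year boundaries are the article's rule of thumb rather than any vendor's policy.

```python
from datetime import date

# Rule-of-thumb lifecycle: 3 years in production, then 2 years in dev/test,
# then non-critical workloads or lab kit.
PRODUCTION_YEARS = 3
DEV_TEST_YEARS = 2


def lifecycle_stage(commissioned: date, today: date) -> str:
    """Suggest a role for a piece of hardware based on its age in whole years."""
    if today < commissioned:
        raise ValueError("today is before the commissioning date")
    # Whole years of service; the current year only counts once the anniversary has passed.
    age = today.year - commissioned.year - (
        (today.month, today.day) < (commissioned.month, commissioned.day)
    )
    if age < PRODUCTION_YEARS:
        return "production"
    if age < PRODUCTION_YEARS + DEV_TEST_YEARS:
        return "dev/test"
    return "non-critical or lab"
```

For a server commissioned on 1 June 2020, for example, this reports "production" until 31 May 2023, "dev/test" from 1 June 2023, and "non-critical or lab" from 1 June 2025.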

Deciding when to refresh - 5 warning signs to look out for

It's time to consider a refresh of either the hardware or the software when:

1) Hardware failures, such as failed hard drives, start to become more frequent.

2) Your internal skills have been lost, or there is reduced confidence that the system can be recovered if there is an issue.

3) The system is no longer supported by the vendor and the skills in the marketplace have become less common.

4) The cost of support and maintenance over the remaining life of the system makes a refresh the more cost-effective option.

5) The recovery time of the system would impact the business to the point where the risk and impact of a failure outweigh the cost of a refresh.
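Warning signs 4 and 5 are both cost comparisons, and a back-of-the-envelope calculation can make the conversation with the business more concrete. The sketch below is one simple way to frame it, not a formal method: it assumes the risk of failure can be expressed as an annual probability multiplied by the business cost of an outage (hourly impact times recovery time), and that `refresh_cost` covers the new system over the same remaining period. All names and figures are hypothetical inputs you would estimate yourself.

```python
from dataclasses import dataclass


@dataclass
class RefreshInputs:
    remaining_years: float             # how much longer the system must stay in service
    annual_support_cost: float         # support, maintenance and spares for the old system per year
    refresh_cost: float                # total cost of a refreshed system over the same period
    annual_failure_probability: float  # assumed chance (0-1) of a serious failure in a given year
    outage_cost_per_hour: float        # assumed business impact while the system is down
    recovery_hours: float              # expected time to recover the old system


def keep_cost(i: RefreshInputs) -> float:
    """Expected cost of keeping the old system for the rest of its life."""
    expected_failure_cost = (
        i.annual_failure_probability * i.outage_cost_per_hour * i.recovery_hours
    )
    return i.remaining_years * (i.annual_support_cost + expected_failure_cost)


def should_refresh(i: RefreshInputs) -> bool:
    """True when keeping the old system is expected to cost more than refreshing it."""
    return keep_cost(i) > i.refresh_cost
```

With 3 years remaining, $10,000 a year in support, a 20% annual chance of a failure that costs $500 an hour over a 48-hour recovery, and a $40,000 refresh, keeping the old system comes out at about $44,400, so the refresh wins; with only 1 year remaining it does not. The probability and outage figures are the hardest to estimate, which is exactly why they are worth discussing openly with the business.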

Summary

Maximising IT investment dollars is a sensible thing to do. While determining the lifespan of infrastructure technology can be a challenge, there are some rules of thumb that can help. Provided it's been carefully thought through and the business understands the implications, there is nothing wrong with sweating those assets to maximise returns. Our message is:

Be sensible, manage the assets properly, and be open with the business about the risks and the benefits.